
You're almost at the finish line. Development is wrapping up, confidence is high, and one thing stands between you and deployment: an audit. You think you're ready. But are you?

Anybody can be ready for an audit. Technically, the only real requirement is having code to review. So what's the real benefit behind putting effort into preparation for an audit?

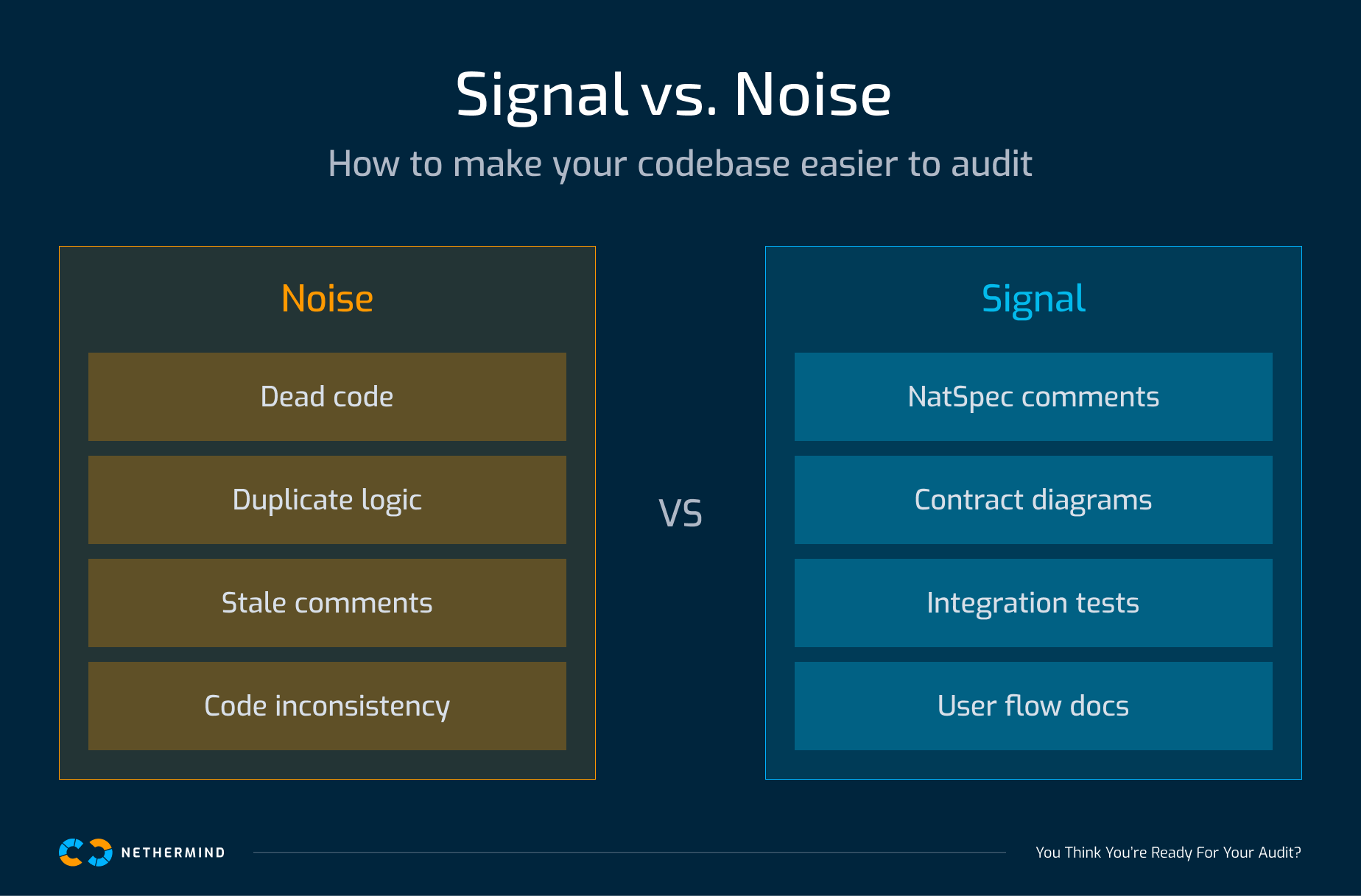

Signal and noise.

Every item you remove from the left column and add to the right improves the ratio, and how effectively auditor time is spent.

At Nethermind Security, we've spent years reviewing code across all kinds of teams. As auditors, we need to build a deep understanding of your system to uncover issues and assess its reliability. That process starts with building context. The less friction we encounter navigating your codebase, the faster we can move past surface-level understanding and into deeper analysis. The better your signal-to-noise ratio, the better the result for you.

With that in mind, here are some ways to better prepare for an audit.

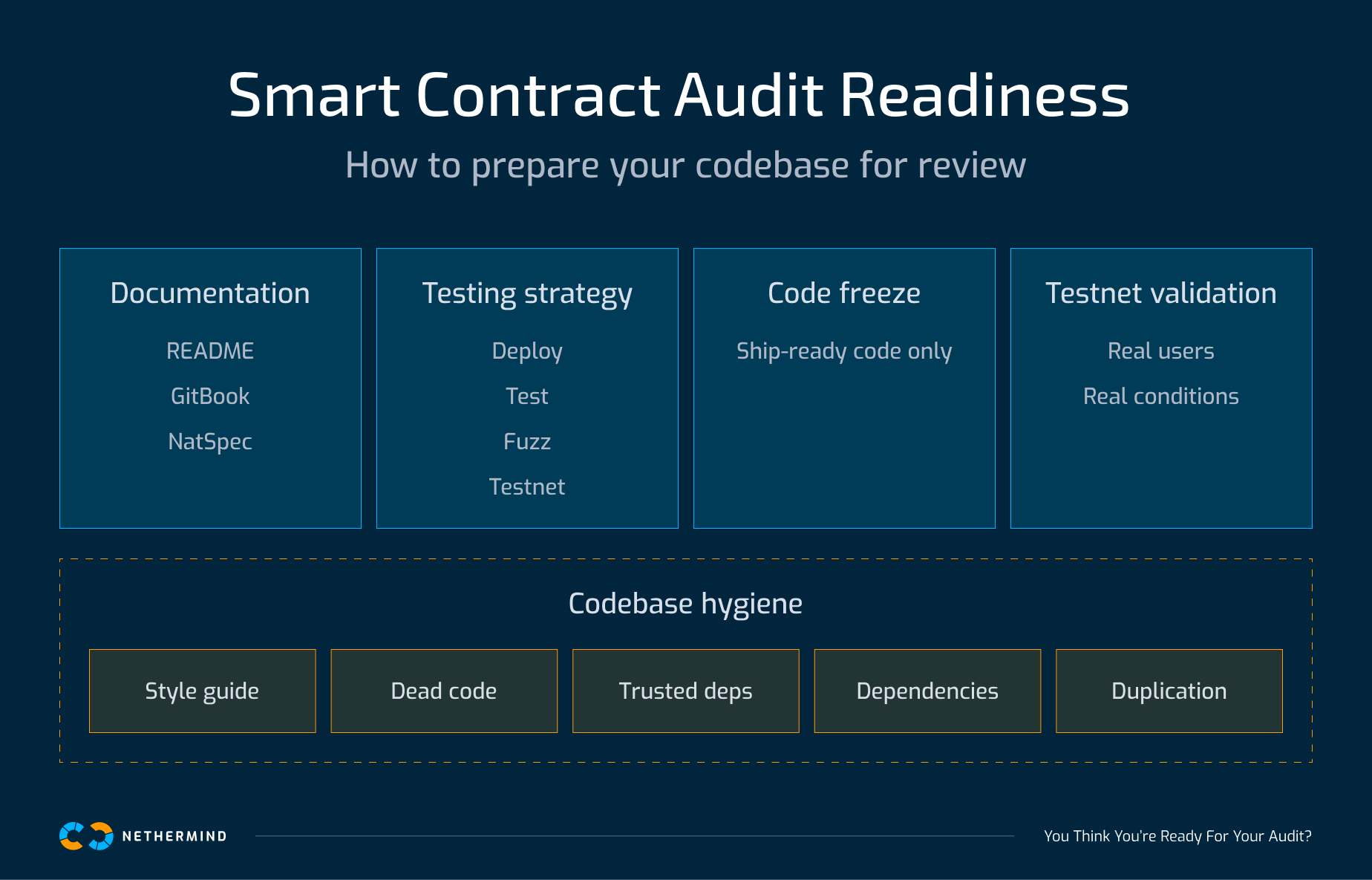

The core areas determine whether an audit can go deep. The hygiene practices determine how cleanly it gets there.

Documentation can take many forms, from GitBooks to README files, and it extends well beyond those. Detailed instructions for setting up the environment, compiling, and running tests are very helpful. Hidden dependencies are common, so running your setup from a fresh environment helps catch them early. Diagrams showing how the different contracts are expected to interact give a high-level overview of the protocol. We even consider code comments to be documentation: well-written NatSpec comments improve clarity and provide context when reading functions.
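
As a rough illustration of what NatSpec-documented code can look like, here is a minimal sketch. The `ExampleVault` contract and all of its members are hypothetical names invented for this example, not taken from any real protocol.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.20;

/// @title ExampleVault (hypothetical contract, for illustration only)
/// @notice Accepts ETH deposits and tracks a balance per account.
contract ExampleVault {
    /// @notice ETH credited to each account, in wei.
    mapping(address => uint256) public balanceOf;

    /// @notice Emitted when `amount` wei is credited to `account`.
    event Deposited(address indexed account, uint256 amount);

    /// @notice Thrown when a deposit of zero wei is attempted.
    error ZeroDeposit();

    /// @notice Deposits the ETH sent with the call and credits it to `recipient`.
    /// @dev Reverts with `ZeroDeposit` if `msg.value` is zero.
    /// @param recipient The account whose balance is increased.
    /// @return newBalance The recipient's balance after the deposit, in wei.
    function deposit(address recipient) external payable returns (uint256 newBalance) {
        if (msg.value == 0) revert ZeroDeposit();
        newBalance = balanceOf[recipient] + msg.value;
        balanceOf[recipient] = newBalance;
        emit Deposited(recipient, msg.value);
    }
}
```

The comments state units, revert conditions, and who is affected, which are the details a reader would otherwise have to reconstruct from the function body.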

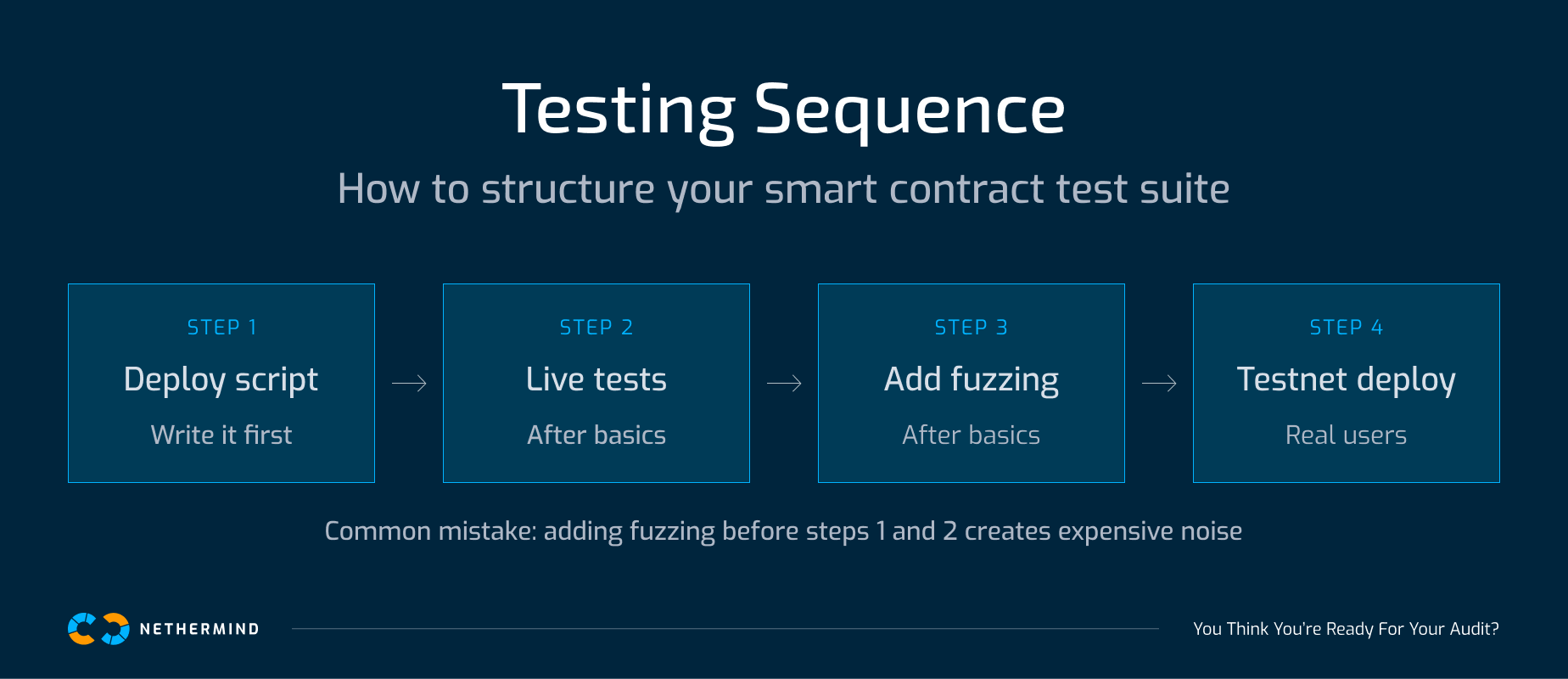

A test suite that runs for ten minutes is not automatically a good test suite. This is especially common when fuzzing and invariant testing are added before basic functionality is validated. We've reviewed protocols with thousands of fuzz tests and full invariant coverage that couldn't execute a basic deposit or withdrawal. The tests looked thorough. The protocol didn't work.

Fuzzing and invariant testing are useful, but they are often added too early. Before using them, get the fundamentals right. Start by writing your deployment script and running tests against it, not against a mocked or simplified setup. The gap between local testing and real deployment is where failures often appear.

From there, ensure you can execute core functionality end-to-end. Call every function through realistic flows with minimal mocking. If something breaks here, no amount of fuzzing will surface it, because fuzz tests don't establish that your protocol works. They explore input variation on flows you've already confirmed work.

Next, integration testing. If your protocol spans multiple contracts, test them working together as they would in production. Isolated unit tests can pass cleanly while the system fails the moment components interact.

Once those foundations are solid, add fuzzing. It's genuinely useful for covering input variety on functions you know already work. Without that foundation, fuzzing becomes expensive noise that hides simple failures.

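A simple sequence:

1. Write your deployment script and run your tests against it.
2. Execute core functionality end-to-end through realistic flows with minimal mocking.
3. Add integration tests that exercise your contracts working together.
4. Add fuzzing and invariant testing once the steps above pass.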

If these steps are in place, your test suite reflects how the protocol actually behaves.
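
The ordering described above (prove the basic flow first, then vary its inputs) could look roughly like the sketch below. It assumes Foundry's `forge-std` as the test framework, which this article does not prescribe. `SimpleVault` is a hypothetical stand-in for your protocol.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.20;

import {Test} from "forge-std/Test.sol";

// Hypothetical minimal vault, standing in for the protocol under test.
contract SimpleVault {
    mapping(address => uint256) public balanceOf;

    function deposit() external payable {
        balanceOf[msg.sender] += msg.value;
    }

    function withdraw(uint256 amount) external {
        // Checked arithmetic reverts if amount exceeds the caller's balance.
        balanceOf[msg.sender] -= amount;
        (bool ok, ) = msg.sender.call{value: amount}("");
        require(ok, "transfer failed");
    }
}

contract SimpleVaultTest is Test {
    SimpleVault internal vault;
    address internal alice;

    function setUp() public {
        // In a real suite, deploy through the same script you use for production.
        vault = new SimpleVault();
        alice = makeAddr("alice");
        vm.deal(alice, 10 ether);
    }

    // First: a plain end-to-end flow with fixed values.
    function test_DepositThenWithdraw() public {
        vm.startPrank(alice);
        vault.deposit{value: 1 ether}();
        assertEq(vault.balanceOf(alice), 1 ether);
        vault.withdraw(1 ether);
        vm.stopPrank();

        assertEq(vault.balanceOf(alice), 0);
        assertEq(alice.balance, 10 ether);
    }

    // Only afterwards: fuzz the same flow across input sizes.
    function testFuzz_DepositThenWithdraw(uint256 amount) public {
        amount = bound(amount, 1, 10 ether);

        vm.startPrank(alice);
        vault.deposit{value: amount}();
        vault.withdraw(amount);
        vm.stopPrank();

        assertEq(vault.balanceOf(alice), 0);
        assertEq(alice.balance, 10 ether);
    }
}
```

If the fixed-value test fails, the fuzz test tells you nothing new, which is why it comes second.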

When submitting code for an audit, it should be totally feature-complete and what you’d deploy to production. Introducing changes during the audit can have a big impact on the audit process; it can affect our flow and focus in deep-dive areas where we now have to study newly added or modified features. Even changes in one area can affect how other parts of the code work, requiring a context refresh in multiple areas even if just one part was changed. Changes introduced after the audit are even riskier, as adding unaudited code can potentially introduce issues where the previous implementation was secure. Rather than having the mindset of “development is close, let’s get an audit done while we finish up” you should instead think, “this is the code we would ship and deploy to mainnet right this moment.”

Real-world scenarios can differ from what you find in structured test suites. Deploying your protocol on a testnet and having real users interact with it, sometimes in flows or patterns you don’t expect, is a great way to test your contracts against real-world conditions. It may uncover a small unexpected behavior that you can’t identify in tests and that only becomes noticeable after it compounds over a week of user interactions. Compared to the other three points, running a testnet deployment and getting real users to engage with it takes significantly more effort, but the benefits aren’t just security-related. You can find improvements in your frontend, backend, smart contracts, and user experience as a whole.

Following a consistent layout across all files improves readability and makes the code much quicker to navigate. For Solidity, we recommend the official style guide, particularly its ordering of declarations such as imports, structs, events, modifiers, constructors, and functions grouped by visibility.
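
As a sketch of that ordering, the skeleton below follows the layout suggested by the Solidity style guide. `LayoutExample` and its members are hypothetical and exist only to show where each kind of declaration goes.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.20;

// Imports would go here, directly after the pragma.

contract LayoutExample {
    // 1. Type declarations
    struct Position {
        uint256 amount;
        uint64 openedAt;
    }

    // 2. State variables
    address public immutable owner;
    mapping(address => Position) internal positions;

    // 3. Events
    event PositionOpened(address indexed account, uint256 amount);

    // 4. Errors
    error NotOwner();

    // 5. Modifiers
    modifier onlyOwner() {
        if (msg.sender != owner) revert NotOwner();
        _;
    }

    // 6. Functions: constructor first, then external, public, internal, private
    constructor() {
        owner = msg.sender;
    }

    function open(address account, uint256 amount) external onlyOwner {
        _open(account, amount);
    }

    function positionOf(address account) public view returns (uint256) {
        return positions[account].amount;
    }

    function _open(address account, uint256 amount) internal {
        positions[account] = Position({amount: amount, openedAt: uint64(block.timestamp)});
        emit PositionOpened(account, amount);
    }
}
```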

During development, things change: a feature is removed or modified, or the entire codebase is refactored. Keep an eye out for remnants of previous implementations. These could be unused imports, constants, or functions, stale NatSpec parameter comments on a function that has since been updated, or old comments explaining logic that has been deleted.

Where possible, import code from a trusted source rather than copying or reimplementing it yourself. This could be as simple as an ERC20 interface file that you’ve copy-pasted into your interfaces directory, or something more complex like a governance voting mechanism. In both cases, you could import the code from the OpenZeppelin Contracts repository instead. Not only does your contracts directory become cleaner, but you can also update your dependencies easily when new versions are released. That brings up the next point.
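
For the ERC20 interface case, a minimal sketch might look like this. It assumes the OpenZeppelin Contracts package is installed and resolvable under the `@openzeppelin/contracts` import path. `BalanceReader` is a hypothetical contract used only to show the import in use.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.20;

// Imported from the OpenZeppelin Contracts package instead of keeping a
// copy-pasted IERC20.sol in a local interfaces directory.
import {IERC20} from "@openzeppelin/contracts/token/ERC20/IERC20.sol";

// Hypothetical contract, for illustration only.
contract BalanceReader {
    /// @notice Returns how much of `token` this contract currently holds.
    function balanceHeld(IERC20 token) external view returns (uint256) {
        return token.balanceOf(address(this));
    }
}
```

Auditors can then treat the interface as known code and keep their attention on the logic that is actually yours.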

The development process can take a while, from months to years in some cases. Over that time, the dependencies you import can receive significant updates, some of them security-related. We highly recommend checking that all your dependencies are up to date and then locking them at a specific version. Locking versions ensures that your protocol behaves exactly the same from build to build, instead of potentially changing as new updates arrive.

You may have some functions that complete similar tasks, with almost copy-pasted logic except for a few small tweaks. In cases like these, aim to move the repeated code into internal functions where possible. This reduces your line count and makes the code more readable, and it helps with maintainability too, since you only need to change one internal function rather than modify the same logic in three or four different places.
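
A minimal sketch of that refactor is shown below. `FeeExample`, its fee values, and its function names are all hypothetical. The point is only that the two external functions share one internal helper instead of repeating the same arithmetic.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.20;

// Hypothetical contract, for illustration only.
contract FeeExample {
    uint256 internal constant BPS = 10_000;

    uint256 public depositFeeBps = 30;
    uint256 public withdrawFeeBps = 50;

    /// @notice Fee charged on a deposit of `amount`.
    function depositFee(uint256 amount) external view returns (uint256) {
        return _fee(amount, depositFeeBps);
    }

    /// @notice Fee charged on a withdrawal of `amount`.
    function withdrawFee(uint256 amount) external view returns (uint256) {
        return _fee(amount, withdrawFeeBps);
    }

    /// @dev Single place where the fee formula lives; rounds down.
    function _fee(uint256 amount, uint256 feeBps) internal pure returns (uint256) {
        return (amount * feeBps) / BPS;
    }
}
```

A change to the rounding rule or the basis-point denominator now touches one function, and a reviewer only has to reason about the formula once.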

If you apply all these points before your audit begins, you will be in a much better position when the code review starts. The signal-to-noise ratio of your codebase doesn't just affect how deep auditors go. It affects what they find. A noisy codebase doesn't produce a worse report because auditors miss things. It produces one because their time went to the wrong places. Every improvement you make, whether removing dead code, tightening your documentation, or validating on testnet, is time handed back to the people looking for real issues.